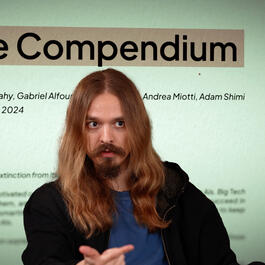

The Compendium - Connor Leahy and Gabriel Alfour

Connor Leahy and Gabriel Alfour, AI researchers from Conjecture and authors of "The Compendium," join us for a critical discussion centered on Artificial Superintelligence (ASI) safety and governance. Drawing on their analysis in "The Compendium," they articulate a stark warning about the existential risks inherent in uncontrolled AI development, framing it through the lens of "intelligence domination": a sufficiently advanced AI could subordinate humanity, much as humans dominate less intelligent species.

SPONSOR MESSAGES:
***
Tufa AI Labs is a brand new research lab in Zurich started by Benjamin Crouzier, focused on o-series style reasoning and AGI. They are hiring a Chief Engineer and ML engineers. Events in Zurich. Go to https://tufalabs.ai/
***

TRANSCRIPT + REFS + NOTES:
https://www.dropbox.com/scl/fi/p86l75y4o2ii40df5t7no/Compendium.pdf?rlkey=tukczgf3flw133sr9rgss0pnj&dl=0

https://www.thecompendium.ai/
https://en.wikipedia.org/wiki/Connor_Leahy
https://www.conjecture.dev/about
https://substack.com/@gabecc

TOC:
1. AI Intelligence and Safety Fundamentals
[00:00:00] 1.1 Understanding Intelligence and AI Capabilities
[00:06:20] 1.2 Emergence of Intelligence and Regulatory Challenges
[00:10:18] 1.3 Human vs Animal Intelligence Debate
[00:18:00] 1.4 AI Regulation and Risk Assessment Approaches
[00:26:14] 1.5 Competing AI Development Ideologies

2. Economic and Social Impact
[00:29:10] 2.1 Labor Market Disruption and Post-Scarcity Scenarios
[00:32:40] 2.2 Institutional Frameworks and Tech Power Dynamics
[00:37:40] 2.3 Ethical Frameworks and AI Governance Debates
[00:40:52] 2.4 AI Alignment Evolution and Technical Challenges

3. Technical Governance Framework
[00:55:07] 3.1 Three Levels of AI Safety: Alignment, Corrigibility, and Boundedness
[00:55:30] 3.2 Challenges of AI System Corrigibility and Constitutional Models
[00:57:35] 3.3 Limitations of Current Boundedness Approaches
[00:59:11] 3.4 Abstract Governance Concepts and Policy Solutions

4. Democratic Implementation and Coordination
[00:59:20] 4.1 Governance Design and Measurement Challenges
[01:00:10] 4.2 Democratic Institutions and Experimental Governance
[01:14:10] 4.3 Political Engagement and AI Safety Advocacy
[01:25:30] 4.4 Practical AI Safety Measures and International Coordination

CORE REFS:
[00:01:45] The Compendium (2023), Leahy et al. https://pdf.thecompendium.ai/the_compendium.pdf
[00:06:50] Geoffrey Hinton Leaves Google, BBC News https://www.bbc.com/news/world-us-canada-65452940
[00:10:00] ARC-AGI, Chollet https://arcprize.org/arc-agi
[00:13:25] A Brief History of Intelligence, Bennett https://www.amazon.com/Brief-History-Intelligence-Humans-Breakthroughs/dp/0063286343
[00:25:35] Statement on AI Risk, Center for AI Safety https://www.safe.ai/work/statement-on-ai-risk
[00:26:15] Machines of Loving Grace, Amodei https://darioamodei.com/machines-of-loving-grace
[00:26:35] The Techno-Optimist Manifesto, Andreessen https://a16z.com/the-techno-optimist-manifesto/
[00:31:55] Techno-Feudalism, Varoufakis https://www.amazon.co.uk/Technofeudalism-Killed-Capitalism-Yanis-Varoufakis/dp/1847927270
[00:42:40] Introducing Superalignment, OpenAI https://openai.com/index/introducing-superalignment/
[00:47:20] Three Laws of Robotics, Asimov https://www.britannica.com/topic/Three-Laws-of-Robotics
[00:50:00] Symbolic AI (GOFAI), Haugeland https://en.wikipedia.org/wiki/Symbolic_artificial_intelligence
[00:52:30] Intent Alignment, Christiano https://www.alignmentforum.org/posts/HEZgGBZTpT4Bov7nH/mapping-the-conceptual-territory-in-ai-existential-safety
[00:55:10] Large Language Model Alignment: A Survey, Jiang et al. http://arxiv.org/pdf/2309.15025
[00:55:40] Constitutional Checks and Balances, Bok https://plato.stanford.edu/entries/montesquieu/
<trunc, see PDF>

From "Machine Learning Street Talk (MLST)"
